What is machine learning?

Machine learning is a branch of artificial intelligence concerned with the design of algorithms that can automatically identify patterns or regularities in data. An example application is the problem of telling whether a person in an image is a “man” or a “woman”. To solve this task, we could manually write a computer program based on rules such as “if the subject has long hair, then it is likely to be a woman”. However, the number of rules needed to solve the problem this way would be too large to design and code by hand. Instead, machine learning techniques can use a dataset of images labeled “man” or “woman” to automatically identify the patterns or regularities shared by images with the same label. These patterns can then be used to make predictions about new, unlabeled images.
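As a toy illustration of learning from labeled examples instead of hand-written rules, the sketch below uses a nearest-centroid classifier, a deliberately simple technique chosen here for brevity and not one of the methods from my own work. It assumes each image has already been reduced to a short numeric feature vector; the feature values and the helper names `fit_centroids` and `predict` are hypothetical.

```python
from statistics import mean


def fit_centroids(examples):
    """Learn one centroid (mean feature vector) per label from labeled data."""
    by_label = {}
    for features, label in examples:
        by_label.setdefault(label, []).append(features)
    # Average each feature dimension over all examples that share a label.
    return {
        label: tuple(mean(dim) for dim in zip(*vectors))
        for label, vectors in by_label.items()
    }


def predict(centroids, features):
    """Assign the label whose centroid is closest in squared Euclidean distance."""
    def sq_dist(centroid):
        return sum((a - b) ** 2 for a, b in zip(features, centroid))
    return min(centroids, key=lambda label: sq_dist(centroids[label]))


# Hypothetical 2-D feature vectors standing in for features extracted from images.
training_data = [
    ((0.9, 0.2), "woman"),
    ((0.8, 0.3), "woman"),
    ((0.1, 0.7), "man"),
    ((0.2, 0.9), "man"),
]
centroids = fit_centroids(training_data)
print(predict(centroids, (0.85, 0.25)))  # woman
```

The "pattern" learned here is just one average feature vector per label; real image classifiers learn far richer representations, but the workflow of fitting to labeled data and then predicting labels for new inputs is the same.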

What are model-based machine learning and the Bayesian framework?

Model-based machine learning is a simple and general recipe for designing machine learning algorithms that are tailored to each new application. The central idea is to propose, for each application, a custom-made model that captures in computer-readable form all our knowledge about the data-generating process. Different algorithms are then used to combine the proposed model with the observed data in order to make predictions. The Bayesian framework is a powerful implementation of this model-based approach. Within this framework, we assume that the observed data are produced by drawing samples from a probabilistic model, and any uncertainty about unknown variables in that model is encoded using probability distributions. Bayes’ theorem is then used to combine these distributions with the observed data in a consistent way. The resulting updated distributions can finally be used to make reliable predictions.

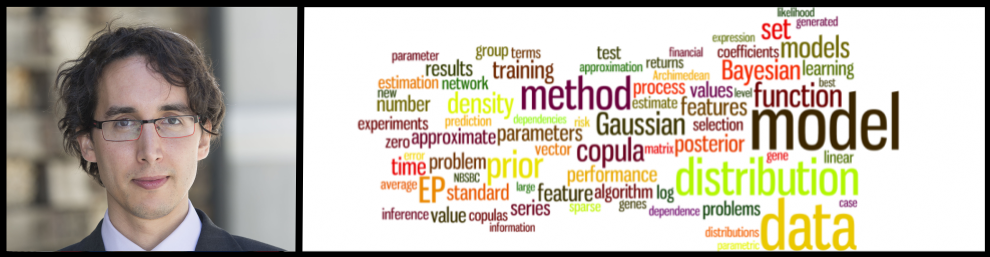
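The Bayesian recipe described above can be sketched on a toy problem. This is a minimal illustration under simple assumptions, not one of my research methods: the data are hypothetical binary observations assumed to be independent draws with an unknown probability `theta` of being 1, the prior over `theta` is uniform, and Bayes’ theorem is applied numerically on a grid of candidate values.

```python
def grid_posterior(observations, grid_size=1001):
    """Posterior over theta (probability of a 1) via Bayes' theorem on a grid."""
    thetas = [i / (grid_size - 1) for i in range(grid_size)]
    prior = [1.0 / grid_size] * grid_size  # uniform prior: no preference for any theta
    ones = sum(observations)
    zeros = len(observations) - ones
    # Likelihood of the observed 0/1 samples under each candidate theta.
    likelihood = [t ** ones * (1 - t) ** zeros for t in thetas]
    # Bayes' theorem: posterior is proportional to prior times likelihood.
    unnormalized = [p * lik for p, lik in zip(prior, likelihood)]
    evidence = sum(unnormalized)  # p(data), the normalizing constant
    posterior = [u / evidence for u in unnormalized]
    return thetas, posterior


def predictive_prob_of_one(thetas, posterior):
    """p(next observation = 1 | data): average theta under the posterior."""
    return sum(t * p for t, p in zip(thetas, posterior))


data = [1, 1, 0, 1, 1, 0, 1, 1]  # hypothetical observations: six 1s, two 0s
thetas, posterior = grid_posterior(data)
print(round(predictive_prob_of_one(thetas, posterior), 3))  # 0.7
```

With a uniform prior this toy model has a closed-form answer: the posterior is a Beta(1 + ones, 1 + zeros) distribution and the predictive probability is (ones + 1) / (n + 2) = 7/10, which the grid approximation reproduces. The grid version is shown because it makes the prior-times-likelihood step explicit; realistic models need scalable approximate inference instead.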

What is my research about?

My work in machine learning includes the design and implementation of scalable methods for approximate inference, and the construction, evaluation and refinement of probabilistic models that successfully describe the statistical patterns present in the data. In recent years, I have designed new machine learning methods for a range of real-world applications. For a more up-to-date view of my research interests, see my recent publications on my Google Scholar profile.

Specific areas of research

- Deep generative models

- Bayesian deep learning

- Molecule generation and optimization

- Data-efficient machine learning

- Neural network compression

- Image compression

- Reinforcement learning and causal inference

- Bayesian optimization

- Graph neural networks

- Interpretable machine learning

- Meta-learning